Next-Generation Secure Computing Base

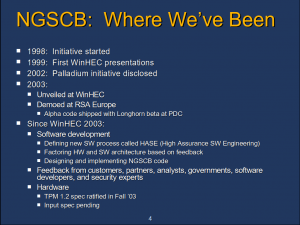

The Next-Generation Secure Computing Base (NGSCB), codenamed Palladium[1] (Pd),[2] was[3] a hardware and software[2][4][5] architecture originally slated to be included in the Microsoft Windows "Longhorn" operating system. Development of the architecture began in 1997.[6][7]

NGSCB relied on new software components and specially designed hardware[2][8] to create a protected memory space[9][10] for applications and services with higher security requirements.[11] Microsoft's primary stated objective with NGSCB was to "protect software from software."[2][4][12]

Upon the announcement of its existence in 2002 under the name "Palladium", reception to NGSCB was mostly negative. Concerns included software lock-in and monopolies,[13][14][15][16] loss of control over information,[13][16][17] and the imposition of digital rights management technology in favor of the music and film industries.[18][19][16][20][17]

Microsoft revised NGSCB at WinHEC 2004, before announcing that only one part of it, the BitLocker drive encryption function, would ship with Windows Vista.

History

Name

In Greek and Roman mythology, the term "palladium" refers to an object on which the safety of a city or nation was believed to depend.[21]

On 24 January 2003, Microsoft announced that "Palladium" had been renamed as the "Next-Generation Secure Computing Base." According to NGSCB product manager Mario Juarez, the new name was chosen not only to reflect Microsoft's commitment to the technology in the upcoming decade, but also to avoid any legal conflict with an unnamed company that had already acquired the rights to the Palladium name. Juarez acknowledged that the previous name had been a source of criticism, but denied that the decision was made by Microsoft in an attempt to deflect criticism.[1]

Pre-Microsoft

The idea of creating an architecture where software components can be loaded in a known and protected state predates the development of NGSCB.[22] A number of attempts were made in the 1960s and 1970s to produce secure computing systems,[23][24] with variations of the idea emerging in more recent decades.[25][26]

In 1999, the Trusted Computing Platform Alliance (TCPA), a consortium of various technology companies, was formed in an effort to promote trust in the PC platform.[27] The TCPA would release several detailed specifications for a trusted computing platform with a focus on features such as code validation and encryption based on integrity measurements, hardware-based key storage, and attestation to remote entities. These features required a new hardware component designed by the TCPA called the Trusted Platform Module (referred to as a Security Support Component,[28] Secure Cryptographic Processor, or Security Support Processor[2] in earlier Microsoft documentation). While most of these features would later serve as the foundation for Microsoft's NGSCB architecture, Microsoft's implementation of them differed.[6] The TCPA was superseded by the Trusted Computing Group in 2003.[29]
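The idea of "encryption based on integrity measurements" can be illustrated with a minimal sketch. It assumes the hash-chain update rule generally used by Trusted Platform Module configuration registers (new value = hash of the old value concatenated with the new measurement) and a SHA-1 digest, as in early TPM designs. The function names, component strings, and the simplified `unseal` check are hypothetical illustrations, not code from the TCPA specifications or from NGSCB.

```python
import hashlib
import hmac

PCR_SIZE = 20  # SHA-1 digest length in bytes (assumption: early TPM-style register)


def extend(pcr: bytes, component: bytes) -> bytes:
    """Fold a measurement of `component` into the register.

    new_pcr = SHA1(old_pcr || SHA1(component))
    """
    measurement = hashlib.sha1(component).digest()
    return hashlib.sha1(pcr + measurement).digest()


def measure_chain(components) -> bytes:
    """Measure a sequence of loaded components, starting from an all-zero register."""
    pcr = bytes(PCR_SIZE)
    for component in components:
        pcr = extend(pcr, component)
    return pcr


def unseal(secret: bytes, sealed_to: bytes, current_pcr: bytes) -> bytes:
    """Hypothetical, simplified stand-in for sealed storage.

    Releases `secret` only if the current register value matches the value
    the secret was sealed to. A real module would keep the secret encrypted
    inside the hardware; this only models the policy check.
    """
    if not hmac.compare_digest(sealed_to, current_pcr):
        raise PermissionError("platform state differs from the sealed state")
    return secret


# Hypothetical component images, for illustration only.
known_good = measure_chain([b"loader-v1", b"kernel-v1"])
tampered = measure_chain([b"loader-v1", b"kernel-v1-patched"])

print(unseal(b"disk-key", known_good, known_good))  # released: state matches
try:
    unseal(b"disk-key", known_good, tampered)
except PermissionError as err:
    print("refused:", err)
```

Because each register value depends on every earlier measurement, changing or reordering any loaded component yields a different final value, which is what lets a secret be tied to one specific software state.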

Microsoft

Development of NGSCB began in 1997 after Microsoft developer Peter Biddle conceived of new ways to protect content on personal computers.[8] It was initially conceived as a way of distributing music and film with digital rights management (DRM).[13] Bill Gates was quoted as saying:

"It's a funny thing," says Bill Gates. "We came at this thinking about music, but then we realized that e-mail and documents were far more interesting domains."

— Steven Levy, The Big Secret, [13]

Microsoft filed a number of patents related to elements of the NGSCB design.[30] Patents for a digital rights management operating system,[22] loading and identifying a digital rights management operating system,[31] key-based secure storage,[32] and certificate-based access control[33] were filed on January 8, 1999. A patent for a method to authenticate an operating system based on its central processing unit was filed on March 10, 1999.[34] Patents related to the secure execution of code[35] and protection of code in memory[36] were filed on April 6, 1999.

During its Windows Hardware Engineering Conference 2000 (WinHEC 2000), Microsoft showed a presentation titled Privacy, Security, and Content in Windows Platforms which focused on the protection of end user privacy and intellectual property.[37] The presentation mentioned turning Windows into a "platform of trust" designed to protect the privacy of individual users.[37] Microsoft made a similar presentation during WinHEC 2001.[38]

NGSCB was publicly unveiled under the name "Palladium" on 24 June 2002 in an article by Steven Levy of Newsweek.[13][18][39] (For more details, see Reception.)

In August 2002, Microsoft posted a recruitment advertisement seeking a group program manager to provide vision and industry leadership in the development of several Microsoft technologies, including its NGSCB architecture.[40]

Encrypted memory was once considered for NGSCB, but the idea was later discarded as the only threat conceived of that would warrant its inclusion was the circumvention of digital rights management technology.[41][42]

In 2003, Microsoft publicly demonstrated NGSCB for the first time at WinHEC 2003[43][44][45] and released a developer preview of the technology later that year during its Professional Developers Conference.[46][47][48] (See In builds of Windows "Longhorn".)

At PDC 2003, Microsoft announced that NGSCB would ship as part of "Longhorn", and that betas and other releases would be in sync with and delivered with "Longhorn". Version 1 of NGSCB would have focused on enterprise applications. Example opportunities were document signing, secure instant messaging, internal applications for viewing secure data, and secure e-mail plug-ins.[5]

During WinHEC 2004, Microsoft announced that it would revise the technology in response to feedback from customers and independent software vendors who stated that they did not want to rewrite their existing programs in order to benefit from its functionality.[50][51] After the announcement, some reports stated that Microsoft would cease development of the technology.[52][53] (Notably, it was reported that the NGSCB code would not be updated in the Longhorn developer preview released at WinHEC 2004;[53] see In builds of Windows "Longhorn".) Microsoft denied the claims and reaffirmed its commitment to delivering the technology.[54][55] Later that year, Microsoft's Steve Heil stated that the company would make additional changes to the technology based on feedback from the industry.[56]

By 2005, Microsoft's lack of updates on its progress with NGSCB had led some in the industry to speculate that it had been cancelled.[57] At the annual Microsoft Management Summit event, then-Microsoft CEO Steve Ballmer said that the company was building on the foundation it had started with NGSCB to create a new set of hypervisor technologies for its Windows operating system.[58] During WinHEC 2005, Microsoft announced that it was delivering Secure Startup, the first part of NGSCB, with the "Longhorn" launch. Secure Startup would protect users against offline attacks, blocking access to the computer if the contents of the hard drive were compromised. This would prevent a laptop thief from booting the system from a floppy disk to circumvent security features, or from swapping out the hard drive.[59][60] (See Legacy.)

The Gartner Group said in May 2005 that NGSCB was morphing into server virtualization.[58][61] Microsoft stated that it planned to deliver other aspects of its NGSCB architecture at a later date.[62]

Reception

Criticism

Steven Levy, who first reported on "Palladium" in Newsweek, was skeptical of the platform:

The first adopters will probably be in financial services, health care and government--places where security and privacy are mandated. Then will come big corporations, where information-technology managers will find it easier to control and protect their networks. (Some employees may bridle at the system's ability to ineluctably log their e-mail, Web browsing and even instant messages.) "I have a hard time imagining that businesses wouldn't want this," says Windows czar Jim Allchin.

Finally, when tens of millions of the units are in circulation, Microsoft expects a flood of Palladium-savvy applications and services to spring up--that's when consumers will join the game.

None of this is a cinch. One hurdle is getting people to trust Microsoft. To diffuse the inevitable skepticism, the Redmondites have begun educational briefings of industry groups, security experts, government agencies and civil-liberties watchdogs. Early opinion makers are giving them the benefit of the doubt. "I'm willing to take a chance that the benefits are more than the potential downside," says Dave Farber, a renowned Internet guru. "But if they screw up, I'll squeal like a bloody pig." Microsoft is also publishing the system's source code. "We are trying to be transparent in all this," says Allchin.

Others will note that the Windows-only Palladium will, at least in the short run, further bolster the Windows monopoly. In time, says Microsoft, Palladium will spread out. "We don't blink at the thought of putting Palladium on your Palm... on the telephone, on your wristwatch," says software architect Brian Willman.

And what if some government thinks that Palladium protects information too much? So far, the United States doesn't seem to have a problem, but less tolerant nations might insist on a "back door" that would allow it to wiretap and search people's data. There would be problems in implementing this, um, feature.

Other potential snags: will Microsoft make it easy enough for people to use? Will someone make a well-publicized crack and destroy confidence off the bat? "I firmly believe we will be shipping with bugs," says Paul England. Don't expect wonders until version 2.0. Or 3.0. Ultimately, Palladium's future defies prediction. Boosting privacy, increasing control of one's own information and making computers more secure are obviously a plus. But there could be unintended consequences. What might be lost if billions of pieces of personal information were forever hidden? Would our ability to communicate or engage in free commerce be restrained if we have to prove our identity first? When Microsoft manages to get Palladium in our computers, the effects could indeed be profound. Let's hope that in setting the policies for its use, we keep in mind the key attribute of the woman embodied in the first Palladium. Athena was the goddess of wisdom.

— Steven Levy, The Big Secret, [13]

The wider response to the "Palladium" announcement was largely negative. Rob of geek.com wrote:

ah, microsoft. it's gotten so much wrong on security for years, and now we are expected to believe that this “solution” is the only way? you've got to be kidding me. by promising digital rights management and privacy for consumers, microsoft has found a socially acceptable way to introduce drm for the riaas and mpaas of corporate america. if this palladium architecture is embraced, i still don't think that it will fix anything.

the only good thing about it is the potential for a dedicated encryption/decryption dsp chip in all pcs. i'd be happy to have a pc that encrypts and decrypts at real-time speeds without requiring a top of the line processor. using such encryption by default to encrypt my instant messenger communications, temporary internet files, browser history, and other things would make me feel more secure. otherwise, i'm not planning to submit my fingerprint to my microsoft-pc each time i log in, and i'm not going to feel “safe” about sending out overly sensitive or perhaps harassing e-mails to users that can't be forwarded to others.

i think the basic premise of palladium is flawed, as well as the assumption that the government will be an early adopter. all of the problems discussed can be worked around with intelligent software programming and user education. to stop spam, microsoft could add a more advanced filtering service to outlook that would protect our privacy, instead of requiring us to submit data to our pc to prove who we are.

palladium may catch on, but only the most uninformed users will unknowingly embrace it.

— rob's opinion, Palladium: Microsoft’s big plan for the PC, [18]

Robert X. Cringely opined:

What bothers me the most about it is not just that we are being sold a bill of goods by the very outfit responsible for making possible most current Internet security problems. "The world is a fearful place (because we allowed it to be by introducing vulnerable designs followed by clueless security initiatives) so let us fix it for you." Yeah, right. Yet Palladium has a very real chance of succeeding.

How long until only code signed by Microsoft will be allowed to run on the platform? It seems that Microsoft is trying to implement a system that will enable them, once and for all, to charge game console-like royalties to software developers.

But how will this stop the "I just e-mailed you a virus" problem? How does this stop my personal information being sucked out of my PC using cookies? It won't. Solving those particular problems is not Palladium's real purpose, which is to increase Microsoft's market share. It is a marketing concept that will be sold as the solution to a problem. It won't really work.

Let's understand here that not all Microsoft products are bad and many are very good. Those products serve real customer needs and do so with genuine purpose, not marketing artifice. But Palladium isn't that way at all. This is NOT about making things better for the user. This is about removing the ability for the end user to make decisions about how his or her computer functions. It is an effort by Microsoft to take literal ownership of Internet technology, Microsoft's "embrace and extend" strategy applied for the Nth time, though on a grander scale than we've ever seen before. While there is some doubt that the PC will survive a decade from now as a product category, nobody is suggesting the Internet will do anything but grow and grow over that time. Palladium assures that whatever hardware is running on the network of 10 years from now, it will be generating revenue for Microsoft. There is nothing wrong with Microsoft having a survival strategy, but plenty wrong with presenting it as some big favor they are doing for us and for the world.

— Robert X. Cringely, I Told You So: Alas, a Couple of Bob's Dire Predictions Have Come True, [14]

John Lettice of The Register wrote:

Given the way Microsoft ordinarily ships 100 million of whatever it wants to ship, we'd expect the company to continue thumping the security and privacy tubs for all they're worth, to start rolling it out around Longhorn time, and to evolve towards making it, and the chips, virtually compulsory through the good offices of Intel, AMD and the major PC companies. This will only work if the publicity campaign to reposition DRM as A Good Thing convinces the users, and that's by no means a given. We haven't even got on to the trustworthiness of the people who'll be keeping custody of your secure digital identity, for starters. Not yet...

— John Lettice, MS DRM OS, retagged ‘secure OS’ to ship with Longhorn?, [19]

Thomas Greene, also of The Register, wrote:

So to validate Harry, and to update his Master Data File -- two bits of business integral to the Palladium scheme -- I'll need hardware, an OS and a server compliant with Redmond specs. Now MS says they're going to make the sources to the core of this technology open. But considering Microsoft's white-knuckled terror of Linux and open source products in general, combined with its established penchant for mining its products with hidden little pissers for the competition, I don't think it's paranoid to imagine that I may have to turn to a packaged product from a major MS partner/collaborator or a Linux distributor who's gone to the bother of obtaining certs for the kernel and the apps. But either way we'll have major GPL problems, as we'll see below. Indeed, this is going to be something of a reductio ad absurdum.

This certification scheme will rip the guts out of the GPL. That is, the minute I begin tinkering with my software, my ability to interface with the Great PKI in the Sky will be broken. I'll have a Linux box with a GPL, all right; but if I exercise the license in any meaningful way I'll render my system 'unauthorized for Palladium' and lose business. So instead, I imagine I'll be turning to my vendor for support, updates, modifications and patches. And I'll be dependent on them for support services at whatever price they can wheedle out of me because I dare not lose my Palladium authorization. I wonder if the cost of ownership of an open-source system will actually be lower than the cost of a proprietary system under such circumstances.

— Thomas C. Greene, MS to eradicate GPL, hence Linux, [15]

Bruce Schneier wrote:

Lots of information about Pd will emanate from Redmond over the next few years, some of it true and some of it not. Things will change, and then change again. The final system may not look anything like what we’ve seen to date. This is normal, and to be expected, but when you continue to read about Pd, be sure to keep several things in mind.

1. A “trusted” computer does not mean a computer that is trustworthy. The DoD’s definition of a trusted system is one that can break your security policy; i.e., a system that you are forced to trust because you have no choice. Pd will have trusted features; the jury is still out as to whether or not they are trustworthy.

2. When you think about a secure computer, the first question you should ask is: “Secure for whom?” Microsoft has said that Pd allows the computer-owner to prevent others from putting their own secure areas on the computer. But really, what is the likelihood of that really happening? The NSA will be able to buy Pd-enabled computers and secure them from all outside influence. I doubt that you or I could, and still enjoy the richness of the Internet. Microsoft really doesn’t care about what you think; they care about what the RIAA and the MPAA think. Microsoft can’t afford to have the media companies not make their content available on Microsoft platforms, and they will do what they can to accommodate them. There’s often a large gulf between what you can get in theory — which is what Microsoft is stressing in their Pd discussions — and what you will be able to have in practice. This is where the primary danger lies.

3. Like everything else Microsoft produces, Pd will have security holes large enough to drive a truck through. Lots of them. And the ones that are in hardware will be much harder to fix. Be sure to separate the Microsoft PR hype about the promise of Pd from the actual reality of Pd 1.0.

4. Pay attention to the antitrust angle. I guarantee you that Microsoft believes Pd is a way to extend its market share, not to increase competition.

There’s a lot of good stuff in Pd, and a lot I like about it. There’s also a lot I don’t like, and am scared of. My fear is that Pd will lead us down a road where our computers are no longer our computers, but are instead owned by a variety of factions and companies all looking for a piece of our wallet. To the extent that Pd facilitates that reality, it’s bad for society. I don’t mind companies selling, renting, or licensing things to me, but the loss of the power, reach, and flexibility of the computer is too great a price to pay.

— Bruce Schneier, Crypto-Gram, [63]

Robert Lemos of CNET wrote:

Richard Stallman, founder of the Free Software Foundation and of the GNU project for creating free versions of key Unix programs, lampooned the technology in a recent column as "treacherous computing."

"Large media corporations, together with computer companies such as Microsoft and Intel, are planning to make your computer obey them instead of you," he wrote. "Proprietary programs have included malicious features before, but this plan would make it universal."

He and others, such as Cambridge University professor Ross Anderson, argue that the intention of so-called trusted computing is to block data from consumers and other PC users, not from attackers. The main goal of such technology, they say, is "digital-rights management," or the control of copyrighted content. Under today's laws, copyright owners maintain control over content even when it resides on someone else's PC--but many activists are challenging that authority.

— Robert Lemos, Trust or treachery?, [64]

Stallman wrote about "Palladium":

The technical idea underlying treacherous computing is that the computer includes a digital encryption and signature device, and the keys are kept secret from you. Proprietary programs will use this device to control which other programs you can run, which documents or data you can access, and what programs you can pass them to. These programs will continually download new authorization rules through the Internet, and impose those rules automatically on your work. If you don't allow your computer to obtain the new rules periodically from the Internet, some capabilities will automatically cease to function.

Of course, Hollywood and the record companies plan to use treacherous computing for Digital Restrictions Management (DRM), so that downloaded videos and music can be played only on one specified computer. Sharing will be entirely impossible, at least using the authorized files that you would get from those companies. You, the public, ought to have both the freedom and the ability to share these things. (I expect that someone will find a way to produce unencrypted versions, and to upload and share them, so DRM will not entirely succeed, but that is no excuse for the system.)

— Richard Stallman, Can You Trust Your Computer?, [20]

Ross Anderson compiled a set of frequently asked questions on NGSCB, which was covered by BBC News,[65] The Register,[66] and CNET.[64] He described the platform, under the term "TC", as follows:

1. What is TC - this `trusted computing' business?

The Trusted Computing Group (TCG) is an alliance of Microsoft, Intel, IBM, HP and AMD which promotes a standard for a `more secure' PC. Their definition of `security' is controversial; machines built according to their specification will be more trustworthy from the point of view of software vendors and the content industry, but will be less trustworthy from the point of view of their owners. In effect, the TCG specification will transfer the ultimate control of your PC from you to whoever wrote the software it happens to be running. (Yes, even more so than at present.)

The TCG project is known by a number of names. `Trusted computing' was the original one, and is still used by IBM, while Microsoft calls it `trustworthy computing' and the Free Software Foundation calls it `treacherous computing'. Hereafter I'll just call it TC, which you can pronounce according to taste. Other names you may see include TCPA (TCG's name before it incorporated), Palladium (the old Microsoft name for the version due to ship in 2004) and NGSCB (the new Microsoft name). Intel has just started calling it `safer computing'. Many observers believe that this confusion is deliberate - the promoters want to deflect attention from what TC actually does.

2. What does TC do, in ordinary English?

TC provides a computing platform on which you can't tamper with the application software, and where these applications can communicate securely with their authors and with each other. The original motivation was digital rights management (DRM): Disney will be able to sell you DVDs that will decrypt and run on a TC platform, but which you won't be able to copy. The music industry will be able to sell you music downloads that you won't be able to swap. They will be able to sell you CDs that you'll only be able to play three times, or only on your birthday. All sorts of new marketing possibilities will open up.

TC will also make it much harder for you to run unlicensed software. In the first version of TC, pirate software could be detected and deleted remotely. Since then, Microsoft has sometimes denied that it intended TC to do this, but at WEIS 2003 a senior Microsoft manager refused to deny that fighting piracy was a goal: `Helping people to run stolen software just isn't our aim in life', he said. The mechanisms now proposed are more subtle, though. TC will protect application software registration mechanisms, so that unlicensed software will be locked out of the new ecology. Furthermore, TC apps will work better with other TC apps, so people will get less value from old non-TC apps (including pirate apps). Also, some TC apps may reject data from old apps whose serial numbers have been blacklisted. If Microsoft believes that your copy of Office is a pirate copy, and your local government moves to TC, then the documents you file with them may be unreadable. TC will also make it easier for people to rent software rather than buy it; and if you stop paying the rent, then not only does the software stop working but so may the files it created. So if you stop paying for upgrades to Media Player, you may lose access to all the songs you bought using it.

For years, Bill Gates has dreamed of finding a way to make the Chinese pay for software: TC looks like being the answer to his prayer.

There are many other possibilities. Governments will be able to arrange things so that all Word documents created on civil servants' PCs are `born classified' and can't be leaked electronically to journalists. Auction sites might insist that you use trusted proxy software for bidding, so that you can't bid tactically at the auction. Cheating at computer games could be made more difficult.

There are some gotchas too. For example, TC can support remote censorship. In its simplest form, applications may be designed to delete pirated music under remote control. For example, if a protected song is extracted from a hacked TC platform and made available on the web as an MP3 file, then TC-compliant media player software may detect it using a watermark, report it, and be instructed remotely to delete it (as well as all other material that came through that platform). This business model, called traitor tracing, has been researched extensively by Microsoft (and others). In general, digital objects created using TC systems remain under the control of their creators, rather than under the control of the person who owns the machine on which they happen to be stored (as at present). So someone who writes a paper that a court decides is defamatory can be compelled to censor it - and the software company that wrote the word processor could be ordered to do the deletion if she refuses. Given such possibilities, we can expect TC to be used to suppress everything from pornography to writings that criticise political leaders.

The gotcha for businesses is that your software suppliers can make it much harder for you to switch to their competitors' products. At a simple level, Word could encrypt all your documents using keys that only Microsoft products have access to; this would mean that you could only read them using Microsoft products, not with any competing word processor. Such blatant lock-in might be prohibited by the competition authorities, but there are subtler lock-in strategies that are much harder to regulate.

— Ross Anderson, `Trusted Computing' Frequently Asked Questions, [17]

Microsoft response

In a list of frequently asked questions about NGSCB, Microsoft wrote:

Q: I have heard that NGSCB will force people to run only Microsoft-approved software.

A: This is simply not true. The nexus-aware security chip (the SSC) and other NGSCB features are not involved in the boot process of the operating system or in its decision to load an application that does not use the nexus. Because the nexus is not involved in the boot process, it cannot block an operating system or drivers or any nexus-unaware PC application from running. Only the user decides what nexus-aware applications get to run. Anyone can write an application to take advantage of new APIs that call to the nexus and related components without notifying Microsoft or getting Microsoft's approval.

It will be possible, of course, to write applications that require access to nexus-aware services in order to run. Such an application could implement access policies that would require some type of cryptographically signed license or certificate before running. However, the application itself would enforce that policy and this would not impact other nexus-aware applications. The nexus and NCAs isolate applications from each other, so it is not possible for an individual nexus-aware application to prevent another one from running.

— Microsoft, Microsoft Next-Generation Secure Computing Base - Technical FAQ, [28]

Microsoft also discussed DRM and NGSCB:

NGSCB is not DRM. The NGSCB architecture encompasses significant enhancements to the overall PC ecosystem, adding a layer of security that does not exist today. Thus, DRM applications can be developed on systems that are built under the NGSCB architecture. The operating system and hardware changes introduced by NGSCB offer a way to isolate applications (to avoid snooping and modification by other software) and store secrets for them while ensuring that only software trusted by the person granting access to the content or service has access to the enabling secrets. A DRM system can take advantage of this environment to help ensure that content is obtained and used only in accordance with a mutually understood set of rules.

While NGSCB technology enables DRM-style policy enforcement, it also can ensure that user policies are rigorously enforced on a user's machines. In addition, nexus-aware software can provide a mechanism to ensure that user interactions in unsafe environments (such as the Internet) can be safeguarded by software that the user trusts to protect his or her interests and wishes.

The powerful security primitives of NGSCB offer benefits for DRM providers but, as important, they provide benefits for individual users and for service providers. NGSCB technology can ensure that a virus or other malevolent software (even embedded in the operating system) cannot observe or record the encrypted content, whether the content contains a user's personal data, a company's business records or other forms of digital content.

— Microsoft, Microsoft Next-Generation Secure Computing Base - Technical FAQ, [28]

Michael Aday, Senior Program Manager, Windows Trusted Platform Technologies at Microsoft, explained:

PD Misconceptions

- Palladium will censor or disable content without user permission
  - As designed, no such mandatory policy can be in Pd
- Palladium will lock out vendors Microsoft doesn't approve of
  - No required Microsoft signatures to use Pd
- Palladium is not controlled by user
  - All Pd programs can be run only if authorized by user
- Palladium is "super" virus spreader
  - Palladium applications do not run at elevated privilege
- Palladium NCA is not debuggable
  - Yes it is. Tag in manifest to turn on debugging.

— Michael Aday, Palladium, [2]

Microsoft also emphasized the "privacy-enabling enhancements" in NGSCB in a November 2003 white paper:

Choice and Control

NGSCB Is Designed to Ship Off by Default

NGSCB is designed to be an opt-in solution. This means computers should be shipped with the NGSCB-specific hardware and software features turned off, disabling the NGSCB functionality completely. (Computer manufacturers may choose to change this default setting to meet specific customer requirements, such as wanting the functionality turned on by default.)

NGSCB Is Designed to Provide Visibility to and Control of Its Operational State

Windows-based PCs with NGSCB will include mechanisms that will give users clear and reliable visibility into the operational state of the NGSCB — specifically, the running nexus.

NGSCB will be designed to enable the machine owner to easily turn NGSCB on and off. This will be accomplished through a software interface (yet to be developed), and it may be complemented by hardware, such as a switch or LED. If the machine owner, for whatever reason, does not want to use NGSCB features, he or she may simply stay with the default setting or turn it off if it is turned on. Returning the computer to the default off setting will disable all NGSCB-specific capabilities. Everything in the standard Windows mode will continue to run as it always has.

NGSCB will be designed to provide users with the ability to see and control information being provided to other parties as part of attestation. This includes the system’s attestation identity keys (AIKs), which comprise the cryptographic representation of the hardware or software state and other integrity metrics, and which can be used to perform anonymous or pseudo-anonymous attestations to remote parties in multiparty transactions.

NGSCB Is Designed to Enable TPM Features to Be Turned Off or On

Just as NGSCB itself must be enabled and can be disabled at any time, the TPM and certain individual features (including access to the TPM’s public key) can also be turned off or on. This will be accomplished through a user interface mechanism yet to be determined that updates the nonvolatile state inside the TPM. Disabled features remain disabled until the machine owner turns them back on.

Anonymity, Pseudo-Anonymity and Unique Information

NGSCB Is Designed to Enable Control of Unique Information

The design of NGSCB is intended to ensure that unique or personally identifiable information will be used only as required to complete a transaction (particularly transactions involving attestation) on terms that the user controls. Through ISV evangelism and other outreach, Microsoft will strongly encourage software developers to build applications that use personally identifiable information in ways that inform users of what is being shared and how it will be used. Such applications should also provide users with an opportunity to stop the transaction before it is completed (in other words, an opt-in model). Microsoft is designing NGSCB to enable the development of programs and systems that will make it easy for machine owners to prevent unauthorized third parties from accessing personally identifiable information (e.g., a credit card number). For example, an NCA could potentially enforce owner-defined policies based on a published privacy protocol (such as P3P), under which a merchant privacy policy could be matched against an owner privacy policy before any information is disclosed.

NGSCB Does Not Correlate Users to PCs

NGSCB is designed to authenticate software and hardware. It will not authenticate users. There will be no inherent mechanism in the architecture of NGSCB to correlate the uniqueness of the computer to the identity of the user.

In practice, users may choose to allow third parties to associate them with certain computers. However, this correlation is only present in cases where the user trusts that third party and can authenticate its environment (perhaps by performing an attestation of the third party). Even in this case, there is no inherent correlation between the machine identity and the user identity. An abstraction should always exist between them.

NGSCB Is Designed to Keep the Private Key Private and the Public Key Secure

The TPM will contain a private key that is never accessible to software executing in the operating system. It is used only to instantiate the NGSCB environment and to provide services to the nexus.

In an NGSCB-capable computer, even the public key on the TPM (also referred to as platform credentials) will be secured against accidental disclosure or unauthorized access. The public key will be accessible only by software that the machine owner explicitly trusts (trust being established by the user taking overt action to run this software). Trusted software can then implement policies as determined by the machine owner. These policies control access to the computer's public key by other clients, servers or services.

In contrast to most public key infrastructure systems, the public key in an NGSCB-capable system will not be made widely available. This design was implemented to prevent indiscriminate tracking of users or computers on the Internet through their public keys.

When and how keys will be generated on the TPM will be dependent on how the TPM is manufactured. The manufacturer should have the option to either enable the TPM to generate its own unique keys, in which case the private key is never exposed to anyone or anything, or to place keys directly into the TPM, in which case the manufacturer (or others) could potentially escrow the keys (a situation that could pose either a threat or a value-add opportunity for customers, depending on the trustworthiness of the manufacturer).

NGSCB Is Designed to Limit Unwanted Exposure of Unique Information

Because the public key component inside each TPM is unique, NGSCB will be designed to protect the public key from exposure against the user’s wishes. This is necessary because a remote party could use the uniqueness property of the public key to turn the public key itself into a machine identifier. To defend against such uses, NGSCB will protect the public key and ensure that it is only revealed to remote parties as part of protocols to establish anonymous or pseudo-anonymous keys and identities for the TPM. Moreover, NGSCB should provide machine owners with control over any unique keys that they create.

NGSCB’s design is intended to protect unique keys and credentials in a manner that is consistent with Microsoft’s corporate privacy statement (http://www.microsoft.com/info/privacy.htm). In fact, the NGSCB architecture should enable the development of programs and services that enforce the tenets of the Microsoft privacy statement. For example, online commerce applications utilizing NGSCB architecture could be designed so that personal information such as a name or credit card number could be collected and used only on terms that the user explicitly authorizes. As noted, however, NGSCB is not limited to this or any other policy. Microsoft wants to empower customers with NGSCB to build and enforce their own policies.

NGSCB is intended to ensure that the TPM’s endorsement key (EK) is protected and used only for the creation of “alias” keys, such as attestation identity keys, that enable anonymity in authenticated transactions. Moreover, NGSCB should provide machine owners with control over any unique keys that they create.

— Microsoft, Privacy-Enabling Enhancements in the Next-Generation Secure Computing Base, [9]

Aftermath

In July 2008, Peter Biddle stated that the negative perception or reception was the main contributing factor responsible for the cancellation of NGSCB.[3]

In 2015, Richard Stallman, founder of the Free Software Foundation, wrote about the Trusted Platform Module, which NGSCB would have required:

As of 2015, treacherous computing has been implemented for PCs in the form of the “Trusted Platform Module”; however, for practical reasons, the TPM has proved a total failure for the goal of providing a platform for remote attestation to verify Digital Restrictions Management. Thus, companies implement DRM using other methods. At present, “Trusted Platform Modules” are not being used for DRM at all, and there are reasons to think that it will not be feasible to use them for DRM. Ironically, this means that the only current uses of the “Trusted Platform Modules” are the innocent secondary uses—for instance, to verify that no one has surreptitiously changed the system in a computer.

Therefore, we conclude that the “Trusted Platform Modules” available for PCs are not dangerous, and there is no reason not to include one in a computer or support it in system software.

This does not mean that everything is rosy. Other hardware systems for blocking the owner of a computer from changing the software in it are in use in some ARM PCs as well as processors in portable phones, cars, TVs and other devices, and these are fully as bad as we expected.

This also does not mean that remote attestation is harmless. If ever a device succeeds in implementing that, it will be a grave threat to users' freedom. The current “Trusted Platform Module” is harmless only because it failed in the attempt to make remote attestation feasible. We must not presume that all future attempts will fail too.

— Richard Stallman, Can You Trust Your Computer?, [20]

Architecture

- Note: this section discusses NGSCB before WinHEC 2004.

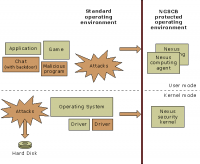

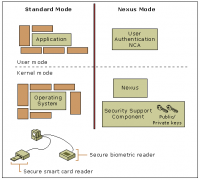

NGSCB would have divided the computing environment into two separate and distinct operating modes.[9] Thus, it would have been composed of two parts: the traditional "left-hand side" (LHS) and the “right-hand side” (RHS) security system. The LHS and RHS would have been a logical, but physically enforced, division or partitioning of the computer.[10]

The LHS would have been composed of traditional applications such as Microsoft Office,[10] along with a conventional operating system, such as Windows.[10][9] Drivers, viruses, and, with minor exceptions, any other software would also have run on the LHS. However, the new hardware memory controller would not have allowed certain "bad" behaviors: for example, code which copied all of memory from one location to the next, or which put the CPU into real mode.[4][5] Another term for the LHS is standard mode.[9]

Meanwhile, the RHS would have worked in conjunction with the LHS system and the central processing unit (CPU). With NGSCB, applications would have run in a protected memory space that is highly resistant to software tampering and interference.[10] (See Strong process isolation). The RHS[10] or nexus mode[9] would have been composed of a “nexus” and trusted agents,[10] called Nexus Computing Agents.[9] The RHS would have also comprised a security support component that would have used a public key infrastructure key pair along with encryption functions to provide a secure state.[10] Other terms for the RHS are the nexus mode or the isolated execution space.[9]

Hardware would have created this secure space.[4] Thus, creating a nexus would have required modification of the CPU, the memory controller or chipset, the keyboard, the video graphics card, and the graphics adapters; and the addition of a new part called the trusted platform module (TPM). The TPM would have been permanently attached to the motherboard and could not have been removed. However, the TPM would have been shipped with its functionality disabled, making NGSCB an opt-in system. Users could independently choose to disable all TPM functionality, effectively disabling NGSCB.[67] (See Trusted Platform Module for more information.)

Typically, there would have been one chipset in the computer that both the LHS and RHS would have used.[9] The LHS and RHS would have also shared hardware resources, including the CPU, RAM, and some input/output (I/O) devices.[68] The RHS was required not to rely on LHS for security. If adversarial LHS code were present, NGSCB was required to not leak secrets. However, the RHS was required to rely on the LHS for stability and services. NGSCB would not have run in the absence of LHS cooperation.[4][5] NGSCB needed the following from the LHS:

What NGSCB Needs From The LHS

- Basic OS services - scheduler

- Device Driver work for Trusted Input / Video

- Memory Management additions to allow nexus to participate in memory pressure and paging decisions

- User mode debugger additions to allow debugging of agents (explained later)

- Window Manager coordination

- Nexus Manager Device driver (nexusmgr.sys)

- NGSCB management software and services

— Brandon Baker, A Technical Introduction to NGSCB, [4]

NGSCB would not have changed the device driver model, resorting to secure reuse of LHS driver stacks whenever possible (i.e., RHS encrypted channel through LHS unprotected account). NGSCB would have needed very minimal access to real hardware. Every line of privileged code was considered a potential security risk. Therefore, there would have been no third-party code nor kernel-mode plug-ins.[4]

The nexus would have halted and exited upon receiving an authorized request to stop from the standard side,[67] or LHS. All nexuses would halt, whether through this process or because of a system exception, clearing all nexus and NCA memory.[67]

"FIG. 2 is a block diagram of an existing NGSCB system having both trusted and untrusted environments" (Source: US7530103B2 patent)[10] Note how the RHS and LHS correspond to the WinHEC 2003 diagram.

"Interaction Between Applications, Operating Systems, and Hardware Devices on an NGSCB-capable Computer" (Source: Microsoft)[11]

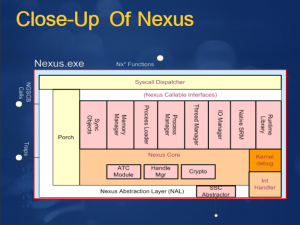

Nexus

The nexus, previously referred to as the "Nub"[6] or "Trusted Operating Root",[69][70] would have hosted, protected, and controlled NCAs.[9] It would have provided NCAs with security services so that NCAs could have provided users with trustworthy computing.[4] The nexus would have isolated trusted agents, managed communications to and from trusted agents, and cryptographically sealed stored data (e.g., stored in a hard disk drive). More particularly, the nexus would have executed in kernel mode in trusted space (see Strong process isolation) and provided basic services to trusted agents, such as the establishment of the process mechanisms for communicating with trusted agents and other applications,[10] otherwise known as an interprocess communication (IPC) mechanism, as well as memory mapping and thread management. The IPC would have provided communication channels among and between NCAs and other programs that were not trusted and that were operating on the same computer or on different computers.[67]

The nexus would have also provided special trust services such as attestation of a hardware/software platform or execution environment and the sealing and unsealing of secrets.[10] The nexus would have stored one or more secrets (private keys and symmetric keys) that it would have only provided to the cryptographically-identified NCA running on a specific hardware platform.[67] Simply stated, the nexus would have offered services to store cryptographic keys and encrypt and decrypt information,[11] and it would have identified[11] and cryptographically[67] authenticated NCAs.[11]

The nexus was also intended to have controlled access to trusted applications and resources by using a security reference monitor, which would have been part of the nexus security kernel.[11]

The nexus would have managed all essential NGSCB services, including memory management, exclusive access to device memory and secure input and output, and access to any non-NGSCB system services.[11]

The nexus was variously described as a kernel,[4][68][11] as like a kernel,[4] as a “high assurance” operating system,[10] as not a complete operating system,[11] as an operating system component, or as a secure system component.[9]

The nexus was required to be small so that every NCA owner could, in principle, examine and trust the implementation of the nexus. To keep the nexus small, it was required to meet the following design criteria:

- Contain code that could be duplicated among NCAs;

- Multiplex its time among NCAs.

- Use the hardware Sealed storage and Attestation functions to store keys on behalf of NCAs, and attest to the combined nexus-NCA software stack more flexibly than would be possible with the hardware primitives. This would have allowed the hardware primitives to be simple and inexpensive, while allowing the NCA primitives to be much more flexible and extensible without sacrificing security.

- Provide (at each NCA’s request) standard management tasks, such as key migration between nexus versions and NCA versions.[67]

In order to minimize its size, the nexus would have implemented only the operating system services, such as access control and memory isolation, that would have been necessary to preserve its integrity. Beyond these components, the nexus and its trusted applications would have relied on services provided by the main operating system, such as the physical storage of data,[11] opening communication channels to identified standard processes, or performing I/O operations that are not trusted, such as hard-disk access.[67] Typically, the nexus would have cryptographically protected this data before exposing it to the main operating system.[11]

The nexus could have booted any time, shut down when not needed, and restarted later.[4][5] Nexus startup would have been atomic and protected in a controlled initial state.[4] The nexus would have been authenticated during computer startup. After authentication, the nexus would have created the protected operating environment within Windows. Programs could then request that the nexus perform trusted services such as starting an NCA.[11]

The nexus could have provided encryption technology to authenticate and protect data that would be entered, stored, communicated, or displayed on the computer, and to help ensure that the data would not be accessed by other applications and hardware devices.[68][11] (For more detail, see Strong process isolation.)

The nexus would have provided a limited set of application programming interfaces (APIs) and services for trusted applications, including sealed storage and attestation functions. The set of nexus system components was chosen to guarantee the confidentiality and integrity of the nexus data, even when the nexus encountered malicious behavior from the main operating system.[11]

NGSCB would have allowed a PC to run one nexus at a time.[9] The hardware would have loaded any nexus, but only one at a time. Each nexus would have gotten the same services. The hardware would have kept nexus secrets separate. Nothing about this architecture would have prevented any nexus from running.[4]

NGSCB would have enforced policy but would not have set the policy. The owner could have therefore controlled which nexuses would be allowed to run.[4][67] This meant they could run any nexus, or write their own and run it, on the hardware. That nexus could only report the attestation provided by the TPM. As Baker put it, "The TPM won't lie". The nexus would not have been able to pretend to be another nexus. Other systems would need to decide if they trust the new derived nexus. Users would just have needed to prove to others that their derivative is legitimate.[4] Users could have also independently chosen to specify nexuses that could access the public key certificate for the TPM, to name nexuses that would have had access to PKSeal and PKUnSeal (thereby enabling the Attestation function in NGSCB), and to name nexuses that would have been authorized to change the foregoing selections. A user could perform these actions through a secure user interface presented by the computer early in the startup sequence, but not through standard software which could be subject to a software attack.[67]

The nexus might permit all applications to run, or a machine owner might configure a machine policy in which the nexus permits only certain agents to run. In other words, the nexus would run any agent that the machine owner tells it to run. The machine owner might also tell the nexus what not to run.[11] Alternatively stated, users could have run any agent, or could have written their own, and run it on the nexus. That agent could report the attestation provided by the nexus. "The nexus won’t lie," according to Baker. The agent could not pretend to be another agent. Other systems would need to decide if they trusted the new derived nexus. Users would just have needed to prove to others that their derivative is legitimate.[4]
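The owner-controlled policy described above can be illustrated with a minimal sketch. The `OwnerPolicy` class, its field names, and the use of a SHA-256 digest as an agent's identity are assumptions made for illustration only; they are not taken from any NGSCB interface.

```python
import hashlib
from dataclasses import dataclass, field


def code_identity(agent_image: bytes) -> str:
    """Hypothetical code identity: the SHA-256 digest of the agent image."""
    return hashlib.sha256(agent_image).hexdigest()


@dataclass
class OwnerPolicy:
    """Hypothetical machine-owner policy consulted before an agent starts."""
    allow_all: bool = True                      # default: run any agent
    allowed: set = field(default_factory=set)   # consulted when allow_all is False
    denied: set = field(default_factory=set)    # always refused

    def may_run(self, agent_image: bytes) -> bool:
        identity = code_identity(agent_image)
        if identity in self.denied:
            return False
        return self.allow_all or identity in self.allowed


# Usage: an owner who permits only one specific agent.
banking = b"banking agent image"
other = b"some other agent image"
policy = OwnerPolicy(allow_all=False, allowed={code_identity(banking)})
print(policy.may_run(banking))  # True
print(policy.may_run(other))    # False
```

The sketch keeps enforcement (`may_run`) separate from the policy data, mirroring the idea that the platform enforces a policy that the owner sets.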

On the software side, Microsoft would have built a nexus designed to complement Windows, and expected other developers and vendors to build nexuses of their own.[9] The Microsoft nexus would run any agent. The platform owner could set policy that limits this. The owner could pick some other delegated evaluator if they choose.[4]

Microsoft pledged to make the source code of the nexus available for public review,[67] so that it could be evaluated and validated by third parties for both security and privacy considerations.[71]

Nexus Computing Agents

Nexus Computing Agents (NCAs),[9] or trusted agents,[10] would have been application processes strictly managed by the nexus. They would have consisted of user-mode code executing within the isolated execution space (nexus mode),[9] also known as the protected operating environment.[68][11] They would have been trusted software components, hosted by the nexus. NCAs would have been used to process data and transactions in curtained memory.[68][11]

An NCA could have been an application in and of itself, or an NCA could have been part of an application that would have also run in the standard Windows environment.[9] In other words, an NCA or trusted agent could have been a program, a part of a program, or a service that runs in user mode in trusted space.[10] Each NCA would have had access only to the memory allocated to it by the nexus. This memory would not have been shared with other processes on the system, unless explicitly allowed by the NCA.[9]

The NCA would have been represented by a signed[5] extensible markup language (XML) document called a manifest.[67] The manifest would have identified the program hash either by naming it directly in the manifest or by naming a public key. This public key would have meant, informally, “Any cryptographic hash signed by the corresponding private key has the same software identity.” In addition, the manifest would have defined the code modules that can be loaded for each NCA, associated human-readable names with the program, noted version numbers, and expressed program-debugging policy[67] (i.e., whether the NCA was debuggable).[2] Debugging an agent really meant debugging via the LHS shadow process.[5]

The manifest would have also provided information about an application that a machine user would have used to determine if the app should run, and would have defined agent components, agent properties (e.g. system requirements and descriptive properties such as the version number), and agent policy requests. The machine owner's policy might, however, have overridden the policy requests in the manifest.[5]
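As a rough illustration of how a manifest could tie an agent to a program hash, the sketch below parses a small XML document and checks a module against the hashes it lists. The element and attribute names (`manifest`, `module`, `sha256`, `policy`, `debuggable`) are invented for this example and do not reflect the actual NGSCB manifest schema. Signature verification and the alternative of naming a public key are omitted.

```python
import hashlib
import xml.etree.ElementTree as ET

# Hypothetical manifest layout; all element and attribute names are assumptions.
MANIFEST_TEMPLATE = """\
<manifest name="Example Agent" version="1.0">
  <module sha256="{digest}"/>
  <policy debuggable="false"/>
</manifest>
"""


def parse_manifest(xml_text: str) -> dict:
    """Extract the descriptive fields, module hashes, and debugging policy."""
    root = ET.fromstring(xml_text)
    policy = root.find("policy")
    return {
        "name": root.get("name"),
        "version": root.get("version"),
        "module_hashes": {m.get("sha256") for m in root.findall("module")},
        "debuggable": policy is not None and policy.get("debuggable") == "true",
    }


def module_is_listed(manifest: dict, module_image: bytes) -> bool:
    """True if the module's SHA-256 digest is named directly in the manifest."""
    return hashlib.sha256(module_image).hexdigest() in manifest["module_hashes"]


image = b"example agent code"
manifest = parse_manifest(
    MANIFEST_TEMPLATE.format(digest=hashlib.sha256(image).hexdigest())
)
print(module_is_listed(manifest, image))             # True
print(module_is_listed(manifest, b"tampered code"))  # False
```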

NCAs would have been monolithic, with no DLLs. Code could have been shared using statically-linked libraries. The composition of NCAs would have been based on IPC, which was blocking and message-oriented. NCAs and LHS processes could have both used IPC: for NCAs to communicate with other NCAs; for LHS applications to communicate with the NCAs they start. Access to IPCs would have been controlled by policy.[5]

An NCA or trusted agent would have called,[10] or could have made requests to,[11] the nexus for security-related services and critical general services[10] or essential NGSCB services,[11] such as memory management.[10] An NCA or trusted agent would have been able to store secrets using sealed storage, and to authenticate itself using the attestation services of the nexus. Each trusted agent or entity would have controlled its own domain of trust, and they need not have relied on each other.[10] Alternatively stated, each NCA would have controlled its own trust relationships, and NCAs would not have needed to trust or rely on each other.[11]

Each NCA was required to operate without forcing the computer to restart, shut down running programs, or cause compatibility problems for existing programs. This would have allowed NCAs to run without restricting other software that was running, or could potentially run, on the computer.[67]

The nexus would have determined the unique code identity of each NCA, which would have enabled the NCA to be specifically designated as trusted or not trusted by multiple entities, such as the user, IT department, a merchant, or a vendor. The mechanism for identifying code in NGSCB would have been unambiguous and policy-independent.[11]

NCAs could have been written in C or C++, using any compiler. Agents could have been instantiated from managed or unmanaged code. An RHS CLR was planned; it would have allowed agents to be written in any .NET language.[5]

Projection would have been the mechanism by which some of the powers and properties of NCAs or trusted agents could be extended to LHS code (e.g., Excel). Rather than porting an application (or mortal) on the LHS, projection would have allowed the construction of a monitoring agent (or angel) for the application. This would have permitted the existing application to run with many of the same useful properties as a trusted agent. Projection might have also been applied to both the LHS operating system (through a base monitoring agent or archangel) and to any LHS application programs for which some level of trusted operation was desirable. Projection might have also been applied to LHS device drivers. Thus, projection would have allowed trusted agents to protect, assure, attest, and extend LHS operating systems, services, and programs.[10]

NCAs were divided into three categories: "Application," "Component," and "Trusted Service Provider."[72]

Application agents would have been stand-alone applications, and good for clients in multi-tier applications, such as an online banking client. The entire application would have run on the RHS.[5]

Component agents would have been components of a larger application. Most of the application would have run on the LHS, but agents would have been used for specific trusted applications. Component agents would have been suitable for adding trusted features to existing Windows applications, such as a document signing component of a word processor.[5]

Trusted Service Provider NCAs would have run entirely in Nexus mode and would have provided services to other NCAs. For example, the Trusted UI Engine, which would have rendered and managed the UI of an NCA, and would have alerted such NCA when events occur on active UI elements, would have been an example of a Trusted Service Provider NCA.[72]

Features

- Note: this section discusses NGSCB before WinHEC 2004.

Developers could have used four main capabilities to protect data against software attacks on NGSCB systems: strong process isolation, sealed storage, secure paths to and from the user,[9] also called secure input and output,[10] and attestation.[9] All NGSCB-enabled application capabilities would have been built off of these four key features[4][5] or pillars.[5] The first three would have been needed to protect against malicious code,[4][5] while attestation would have broken new ground in distributed computing.[5]

Strong process isolation

Strong process isolation would have been a mechanism for protecting data in memory. It would have been created and maintained by the nexus and enforced by NGSCB hardware[9][5] and software.[4][5] The hardware would have notified the nexus of certain operations, and the nexus would have arbitrated page tables, control registers, and others.[4][5]

Agents and the nexus would have run in curtained memory,[2][4][5] which would have been inaccessible to other agents, to the standard Windows kernel,[4][5] and to hardware direct memory access[11] (DMA) devices;[4][5] DMA access would have been blocked by a special structure called the DMA exclusion vector.[11]
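One way to picture a DMA exclusion vector is as a bitmap with one bit per physical memory page, where a set bit means that DMA to that page is refused. The sketch below models that idea in software. The 4 KiB page size, the bit layout, and all class and method names are assumptions for illustration, and real enforcement would happen in the memory controller or chipset rather than in code like this.

```python
PAGE_SIZE = 4096  # assumed page size in bytes


class DmaExclusionVector:
    """Toy model: one bit per physical page; a set bit blocks DMA to that page."""

    def __init__(self, num_pages: int) -> None:
        self.num_pages = num_pages
        self.bits = bytearray((num_pages + 7) // 8)

    def _check(self, page: int) -> None:
        if not 0 <= page < self.num_pages:
            raise IndexError("page out of range")

    def protect(self, page: int) -> None:
        self._check(page)
        self.bits[page // 8] |= 1 << (page % 8)

    def unprotect(self, page: int) -> None:
        self._check(page)
        self.bits[page // 8] &= ~(1 << (page % 8)) & 0xFF

    def is_protected(self, page: int) -> bool:
        self._check(page)
        return bool(self.bits[page // 8] & (1 << (page % 8)))

    def dma_allowed(self, address: int, length: int) -> bool:
        """Allow a transfer only if every page it touches is unprotected."""
        if address < 0 or length <= 0:
            raise ValueError("address must be >= 0 and length must be > 0")
        first = address // PAGE_SIZE
        last = (address + length - 1) // PAGE_SIZE
        if last >= self.num_pages:
            return False  # transfers outside modeled memory are refused
        return not any(self.is_protected(p) for p in range(first, last + 1))


dev = DmaExclusionVector(num_pages=16)
dev.protect(3)                                # page 3 holds curtained memory
print(dev.dma_allowed(0, PAGE_SIZE))          # True
print(dev.dma_allowed(3 * PAGE_SIZE, 1))      # False
```

A transfer that merely overlaps a protected page is rejected as a whole, which is the conservative choice for a structure meant to keep devices out of curtained memory.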

Strong process isolation would have provided an execution and memory space,[9] a trusted space, by carving out a secure area (the RHS).[10] This space would have been a specific portion of RAM within the address space.[11] This space would have been protected from external access and software-based attacks (even those launched from the kernel),[9] and would have provided a restricted and protected address space for applications and services that have higher security requirements.[11] This curtained memory would have guaranteed against leaking information to the world outside of the nexus, and would have permitted only certain certified applications to execute under the nexus and to access the curtained memory.[10]

Operations that run on the RHS would have been protected and isolated from the LHS, which would have made them significantly more secure from attack.[10] In other words, strong process isolation would have prevented rogue applications from changing NGSCB data or code while it was running.[4][5]

Because of strong process isolation and curtained memory, the main operating system would have been largely unaware of the NGSCB system. The nexus could be started at any time through authenticated startup, which enables hardware and software components to authenticate themselves within the system. After the nexus was authenticated, the nexus and its trusted applications would be protected in isolated memory and could not be accessed by the main operating system.[11] The nexus was required not to interact with the main operating system in any way that would allow events happening at the main operating system to compromise the behavior of the nexus.[10] Thus, this protected operating environment provided a higher level of secure processing while leaving the rest of the computer's hardware and software unaffected.[11]

In user interface terms, when users run trusted applications within this curtained memory, all processes would operate within special "trusted windows." A non-writable banner with a trusted icon and program name would appear on the top of each trusted window. The trusted window could not be covered by other windows for programs that were running in the standard operating system environment. If more than one trusted window is open on the desktop, they would not overlap.[11]

Sealed storage

Sealed storage would have been a mechanism for protecting data in storage.[9] It would have allowed the user to encrypt information[10] with a key rooted in the hardware,[4][5] so that it could only be accessed by a trustworthy application,[10] the designated trusted entity that stored it,[9] or by authenticated entities.[4][5]

NGSCB would have provided sealed data storage by using a special security support component (SSC). The SSC would have provided the nexus with individualized encryption services to manage the cryptographic keys, including the NGSCB public and private key pairs and the Advanced Encryption Standard (AES) key from which keys would have been derived for trusted applications and services.[11] Each nexus would have generated a random keyset on first load. The TPM chip on the motherboard would have protected the nexus keyset.[4][5] An NCA would have used these derived keys for data encryption.[11] Simply put, agents would have used nexus facilities to seal (encrypt and sign) private data. The nexus would have protected the key from any other agent/application, and the hardware would have prevented any other nexus from gaining access to the key.[4][5] File system operations by the standard operating system would have provided the storage services.[11]

The trustworthy application could have been just the application that created the information in the first place, or any application that was trusted by the application that owned the data. Therefore, sealed storage would have allowed a program to store secrets that could not be retrieved by nontrusted programs, such as a virus or Trojan horse,[10] and would have prevented rogue applications from getting at encrypted data.[4][5] In addition, sealed data could not have been read if another operating system were started or if the hard disk were moved to another computer. NGSCB would have provided mechanisms for backing up data and for migrating secure information to other computers.[11]

Sealed storage would have also verified the integrity of data when unsealing it.[4][5]
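
The sealing behavior described above (keys derived per application, encryption plus an integrity check on unsealing) can be illustrated with a minimal Python sketch. It is not the NGSCB implementation: the function names are hypothetical, an HMAC-based keystream stands in for AES because the standard library has no AES, and an HMAC tag stands in for the signature. The sketch assumes the per-agent key is derived from a nexus key and a hash of the agent's code.

```python
import hashlib
import hmac
import os


def derive_agent_key(nexus_key: bytes, agent_code: bytes) -> bytes:
    """Derive a per-agent key from the nexus key and the agent's code digest.

    Assumption: code identity is modeled as SHA-256 of the agent's code.
    """
    code_id = hashlib.sha256(agent_code).digest()
    return hmac.new(nexus_key, b"seal" + code_id, hashlib.sha256).digest()


def _keystream(key: bytes, nonce: bytes, length: int) -> bytes:
    """Toy keystream (HMAC in counter mode); a stand-in for AES, not AES itself."""
    out = b""
    counter = 0
    while len(out) < length:
        block = nonce + counter.to_bytes(8, "big")
        out += hmac.new(key, block, hashlib.sha256).digest()
        counter += 1
    return out[:length]


def seal(agent_key: bytes, plaintext: bytes) -> bytes:
    """Encrypt the data and append an integrity tag."""
    nonce = os.urandom(16)
    enc_key = hmac.new(agent_key, b"enc", hashlib.sha256).digest()
    mac_key = hmac.new(agent_key, b"mac", hashlib.sha256).digest()
    stream = _keystream(enc_key, nonce, len(plaintext))
    ciphertext = bytes(a ^ b for a, b in zip(plaintext, stream))
    tag = hmac.new(mac_key, nonce + ciphertext, hashlib.sha256).digest()
    return nonce + ciphertext + tag


def unseal(agent_key: bytes, blob: bytes) -> bytes:
    """Verify the integrity tag first, then decrypt; raise ValueError on failure."""
    if len(blob) < 48:
        raise ValueError("sealed blob too short")
    nonce, ciphertext, tag = blob[:16], blob[16:-32], blob[-32:]
    enc_key = hmac.new(agent_key, b"enc", hashlib.sha256).digest()
    mac_key = hmac.new(agent_key, b"mac", hashlib.sha256).digest()
    expected = hmac.new(mac_key, nonce + ciphertext, hashlib.sha256).digest()
    if not hmac.compare_digest(tag, expected):
        raise ValueError("wrong key or modified data")
    stream = _keystream(enc_key, nonce, len(ciphertext))
    return bytes(a ^ b for a, b in zip(ciphertext, stream))
```

In this sketch, an agent whose code differs by even one byte derives a different key, so its attempt to unseal another agent's data fails the integrity check instead of returning plaintext.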

Sealed storage would have bound long-lived confidential information to code. Long-lived information was confidential information whose lifetime would have exceeded that of the process accessing it. For example, a banking application might need to store confidential banking records for use at a later time, or a browser might need to store user credentials on the hard disk and protect that data from tampering.[11]

Encrypted files would have been useless if stolen or surreptitiously copied.[11]

Secure paths to and from the user

Secure paths to and from the user would have been mechanisms for protecting data moving from input devices to the NCAs, and from NCAs to the monitor screen.[9] NGSCB would have supported secure input through upgraded keyboards and Universal Serial Bus (USB) devices,[4][68][11][5] allowing a local user at a local keyboard or other device to communicate privately with an NCA[68] or a trusted application.[11] Other protected input devices would have included mice and integrated input for laptops.[4][5]

Data entered by the user and presented to the user could not have been read by malicious software such as spyware or “Trojan horses,”[9] including programs that read keystrokes or that allow a remote user or program to act as a legitimate local user.[68] Malicious software could not have mimicked or intercepted input, or intercepted, obscured, or altered output;[9] in the keyboard setting, malicious software could not have been used to record, steal, or modify keystrokes.[10] The secure path would have provided assurance that the system was dealing with the real user, not an application spoofing the user.[4][5]

Secure output would have been similar.[10] Graphics adapters were generally optimized for performance rather than security, allowing software to read or write to video memory easily and making securing video very difficult.[11] A secure channel would have existed between the nexus and the graphics adapter.[4][5] The information that would have appeared onscreen could have been presented to the user so that no one else could intercept and read it. Taken together, these things would have allowed a user to know with a high degree of confidence that the software in their computer was doing what it was supposed to do.[10] NGSCB dialog boxes could not be obscured, and they had visual cues that allowed users to be certain that the window was not being displayed by the standard side[67] or the LHS.

Two-factor authentication using NGSCB-enabled smart cards and biometric devices could have been combined with the trustworthy computing capabilities of an NGSCB system to provide the strongest user authentication at reasonable cost. This combination of NGSCB and authentication input devices would have addressed the human factor problem involved in password credentials, and the hijacking scenario in the case of biometric authentication.[68] NGSCB-enabled devices would have been attached to the system, and the user authentication software component would have run as an NCA in the protected operating environment.[68]

NGSCB would have added the following secure input capabilities to two-factor authentication to provide the strongest possible user authentication:

- Integrity: The NGSCB system verifies that user authentication information was not modified after it was submitted. For example, the system verifies that another entity did not substitute different information.

- Confidentiality: The NGSCB system maintains the security of the user authentication information by ensuring that no other entity can read the information.

- Authentication: The NGSCB system verifies that the user authentication information it receives is submitted by secure input devices. No other entity could have sent the information.

— Microsoft, Secure User Authentication for the Next-Generation Secure Computing Base, [68]

Smart cards, biometrics, and other authentication input devices could have been made trustworthy by embedding an input security support component into the device or into the hub to which the device connects. When these devices were plugged into the computer and the NGSCB system was turned on, the system could have determined whether the devices were secure and set up a path for the exchange of authentication information between the devices and the user authentication software component NCA running in the computer's protected operating environment.[68]
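
The integrity and authentication properties of such an input path can be sketched in Python as follows. This is only an illustration under stated assumptions, not the NGSCB protocol: the `InputDevice` and `AuthAgent` classes are hypothetical, the device and the NCA are assumed to already share a session key, and confidentiality (encrypting the keystrokes) is omitted for brevity. A message counter is added so that a captured packet cannot be replayed.

```python
import hashlib
import hmac
import struct


class InputDevice:
    """Hypothetical secure input device sharing a session key with the NCA."""

    def __init__(self, session_key: bytes):
        self._key = session_key
        self._counter = 0

    def send(self, keystroke: bytes) -> bytes:
        """Return counter + keystroke + HMAC tag over both."""
        self._counter += 1
        header = struct.pack(">Q", self._counter)
        tag = hmac.new(self._key, header + keystroke, hashlib.sha256).digest()
        return header + keystroke + tag


class AuthAgent:
    """Hypothetical NCA that accepts only authentic, unmodified, fresh input."""

    def __init__(self, session_key: bytes):
        self._key = session_key
        self._last_counter = 0

    def receive(self, packet: bytes) -> bytes:
        if len(packet) < 40:
            raise ValueError("packet too short")
        header, keystroke, tag = packet[:8], packet[8:-32], packet[-32:]
        expected = hmac.new(self._key, header + keystroke, hashlib.sha256).digest()
        # Authentication and integrity: only a holder of the key can make this tag.
        if not hmac.compare_digest(tag, expected):
            raise ValueError("input not from the secure device, or modified")
        (counter,) = struct.unpack(">Q", header)
        # Freshness: reject packets that were already seen.
        if counter <= self._last_counter:
            raise ValueError("replayed input")
        self._last_counter = counter
        return keystroke
```

Software without the session key can neither forge a keystroke nor alter one in transit without the agent rejecting the packet.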

Attestation

Attestation would have been a mechanism for authenticating a given software and hardware configuration, either locally or remotely.[9] Attestation referred to the ability of a piece of code to digitally sign or otherwise attest to a piece of data and further assure the recipient that the data was constructed by an unforgeable, cryptographically identified software stack.[10][11] It would have enabled a user to verify that they were dealing with an application and machine configuration they trusted.[5]

Attestation would have let other computers know that a computer was really the computer it claimed to be, and was running the software it claimed to be running.[10] It would have been based on secrets rooted in hardware combined with cryptographic representations (hash vectors) of the nexus and/or software running on the machine. Attestation would have been a core feature for enabling many of the privacy benefits in NGSCB.[9] Because NGSCB software and hardware would have been cryptographically verifiable to the user and to other computers, programs, and services, the system could have verified that other computers and processes were trustworthy before engaging them or sharing information. Thus, when used in conjunction with certification and licensing infrastructure,[11] attestation would have allowed the user to reveal selected characteristics of the operating environment to external requestors,[10][11] and to prove to remote service providers that the hardware and software stack was legitimate. By authenticating themselves to remote entities, trusted applications could have created, verified, and maintained a security perimeter that would not have required trusted administrators or authorities.[11]

For example, a banking company might have provided NGSCB-capable computers to its high-profile customers to help provide secure remote access and processing for Internet banking transactions that contain highly sensitive and valuable information. The banking company would have then built its own NGSCB-trusted application that would have used a secure network protocol, enabling the customers to communicate with a server application on the company's servers.[11]

When requested by an agent, the nexus could have prepared a chain that authenticated: the agent by digest, signed by the nexus; the nexus by digest, signed by the TPM; and the TPM by public key, signed by the OEM or the IT department. The machine owner would have set policies to control which forms of attestation each agent or group of agents could use. A secure communications agent would have provided higher-level services to agent developers. It would have opened a secure channel to a service using a secure session key, and responded to an attestation challenge from the service based on user policy.[4][5]
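
The three-link chain described above (agent digest signed by the nexus, nexus digest signed by the TPM, TPM public key signed by the OEM) can be sketched in Python. This is a toy model, not the NGSCB or TPM wire format: it uses textbook RSA built from small Mersenne primes, which is insecure and serves only to make the chain runnable with the standard library. The names `ToyKey`, `build_chain`, and `verify_chain` are hypothetical, and having the TPM sign the nexus public key together with the nexus digest is an assumption made so that the verifier can check the last link.

```python
import hashlib
from dataclasses import dataclass

E = 65537  # public exponent shared by every toy key


def _hash_to_int(message: bytes, n: int) -> int:
    return int.from_bytes(hashlib.sha256(message).digest(), "big") % n


@dataclass(frozen=True)
class ToyKey:
    """Textbook RSA key pair (illustration only, not secure)."""
    n: int  # public modulus
    d: int  # private exponent

    @classmethod
    def from_primes(cls, p: int, q: int) -> "ToyKey":
        return cls(p * q, pow(E, -1, (p - 1) * (q - 1)))

    def sign(self, message: bytes) -> int:
        return pow(_hash_to_int(message, self.n), self.d, self.n)


def verify(n: int, message: bytes, signature: int) -> bool:
    return pow(signature, E, n) == _hash_to_int(message, n)


def encode(n: int) -> bytes:
    return n.to_bytes((n.bit_length() + 7) // 8, "big")


def build_chain(oem, tpm, nexus, nexus_code: bytes, agent_code: bytes) -> dict:
    """Assemble the chain: OEM -> TPM key, TPM -> nexus, nexus -> agent."""
    nexus_digest = hashlib.sha256(nexus_code).digest()
    agent_digest = hashlib.sha256(agent_code).digest()
    return {
        "tpm_pub": tpm.n,
        "tpm_cert": oem.sign(encode(tpm.n)),
        "nexus_pub": nexus.n,
        "nexus_digest": nexus_digest,
        "nexus_sig": tpm.sign(nexus_digest + encode(nexus.n)),
        "agent_digest": agent_digest,
        "agent_sig": nexus.sign(agent_digest),
    }


def verify_chain(chain: dict, oem_pub: int) -> bool:
    """Check every link, starting from the OEM key the verifier already trusts."""
    nexus_statement = chain["nexus_digest"] + encode(chain["nexus_pub"])
    return (
        verify(oem_pub, encode(chain["tpm_pub"]), chain["tpm_cert"])
        and verify(chain["tpm_pub"], nexus_statement, chain["nexus_sig"])
        and verify(chain["nexus_pub"], chain["agent_digest"], chain["agent_sig"])
    )


# Toy keys built from known Mersenne primes.
OEM = ToyKey.from_primes(2**61 - 1, 2**89 - 1)
TPM = ToyKey.from_primes(2**107 - 1, 2**127 - 1)
NEXUS = ToyKey.from_primes(2**521 - 1, 2**607 - 1)
```

A remote service that trusts only the OEM public key can call `verify_chain` and then compare the reported nexus and agent digests against the values it is willing to accept.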

All trust relationships could have been traced back to the "root of trust." Trust relationships were only as strong as their root. For example, if the CA gave away all its secrets to untrustworthy entities, a requestor could potentially download a malicious program without knowing that the trust relationship had been compromised. The NGSCB root of trust would have been made as strong as possible by embedding a 2048-bit RSA public and private key pair in the SSC that would have stored the shared secrets. The coprocessor's private key could not be accessed; it could only be used to encrypt and decrypt secrets.[11] The computer's RSA private key would have been embedded in hardware and never exposed. If computer-specific secrets were somehow accessed by a sophisticated hardware attack, they would have applied only to data on the compromised computer and could not have been used to develop widely deployable programs that could compromise other computers. In the case of an attack, a compromised computer could have been identified by IT managers, service providers, and other systems, and then excluded from the network.[11]

Neither the nexus nor an agent could directly determine whether it was running in the secured environment. They would have been required to use Remote Attestation or Sealed Storage to cache credentials or secrets to prove that the system was sound.[4]

Attestation would have involved secure authentication of subjects (e.g., software, machines, services)[4][9][5] through code ID.[4][9] This would have been separate from user authentication.[4][9][5]

WinHEC 2004

Biddle announced during his presentation at WinHEC 2004:

The original plans required users to update both their hardware and software.

But Peter Biddle, product unit manager for Microsoft's security business, told delegates at the WinHEC show: "Customers have told us that they require the benefits 'out of the box', without having to write or rewrite applications."

As a result, NGSCB will not shield individual applications but will create 'secure compartments'. The operating system will contain compartments for elements such as the actual operating system, computing tasks and administration and management.

— Tom Sanders, Microsoft shakes up Longhorn security, [51]

Security would still have been first. The same nexus would support both Windows client and server. "Longhorn" would run with or without NGSCB. NGSCB would have more direct support for Windows (e.g., “Cornerstone”), and would have been more closely aligned to Windows components (e.g., compartments). Isolation would have been provided per compartment, rather than per process.[49]

System services would be provided to the operating system in the system-only compartment. An IPC mechanism would have been used to call them. The same services described in Features would still be provided, namely, isolation, sealed storage, trusted path, and attestation. TPM 1.2 would have been used to root sealed storage.[49]

The nexus would have managed sufficient hardware to provide useful isolation, including the CPU, memory, and TPM (crypto processor). The secure compartment would have managed secure I/O. The primary operating system would have managed all other hardware.[49]

"Cornerstone" would have prevented a thief who booted another operating system or ran a hacking tool from breaking core Windows protections, and provided a root key which could be used by third parties to protect their secrets against the same attack. User login and authentication would have been done in a secure compartment. Meanwhile, under Code Integrity Rooting (CIR), boot and system files would have been validated and their integrity checked prior to the release of the SYSKEY into the legacy operating system.[49]

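The idea behind Code Integrity Rooting, releasing a key only after boot and system files have been validated, can be sketched in Python as follows. This is a simplified illustration, not Microsoft's design: the function names and the manifest format (file name mapped to an expected SHA-256 digest) are assumptions.

```python
import hashlib
import hmac
from typing import Dict, Optional


def measure(files: Dict[str, bytes]) -> Dict[str, str]:
    """Compute a SHA-256 digest for each boot or system file."""
    return {name: hashlib.sha256(data).hexdigest() for name, data in files.items()}


def release_syskey(
    files: Dict[str, bytes],
    expected: Dict[str, str],
    syskey: bytes,
) -> Optional[bytes]:
    """Return the key only if every file in the manifest is present and unmodified.

    Assumption: `expected` maps file names to known-good hex digests.
    """
    actual = measure(files)
    for name, good_digest in expected.items():
        digest = actual.get(name)
        if digest is None or not hmac.compare_digest(digest, good_digest):
            return None  # missing or altered file: withhold the key
    return syskey
```

If any listed file is altered or missing, the function returns `None`, modeling a boot in which the key is never handed to the legacy operating system.
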
The Integrated Security Support Component intended for secure input could have protected against both software and hardware attacks, but it required new keyboards, new mice, and user retraining, among other costs, which would have been out of proportion to the problems that most users would face. It was scrapped in favor of Intel’s Trusted Mobile Keyboard Controller for mobile devices, and work to get changes for USB into chipsets without requiring new USB devices or new drivers.[73]

Comparison between NGSCB as described in WinHEC 2003 versus WinHEC 2004 (Source: Peter N. Biddle, Microsoft)[49]

Diagram of NGSCB architecture revision shown during WinHEC 2004. (Source: Peter N. Biddle, Microsoft)[49]

Trusted Platform Module

The Trusted Platform Module (TPM) is a cryptographic co-processor specified by the Trusted Computing Group. It contains cryptographic keys, performs basic cryptographic services,[9][74] and stores cryptographic hashes,[9] or platform measurements. The TPM anchors the chain of trust for keys, digital certificates, and other credentials.[74]

The public-private key pair that is created as part of the TPM’s manufacturing process is called the endorsement key (EK),[9] which is unique, generated only once, and is the root key for establishing the identity used for attestation. It is a 2048-bit RSA key pair. Microsoft claimed that it would not be involved in generating the EK. The TPM owner could enable or disable access to the EK, thus enabling or disabling attestation.[74]

The private key component of the EK never leaves,[9] and is known only to,[74] the TPM. The private key would never have been accessible to software executing in the operating system. It would have been used only to instantiate the NGSCB environment and to provide services to the nexus.[9]

In an NGSCB-capable computer, even the public key on the TPM (also referred to as platform credentials) would have been secured against accidental disclosure or unauthorized access. The public key component of the EK would have been used by NGSCB only to create “alias” keys (called attestation identity keys (AIKs) in the TPM specification) that could have been used to ensure anonymity. The public key would have been accessible only by software that the machine owner explicitly trusted (trust being established by the user taking overt action to run this software). Trusted software could then have implemented policies as determined by the machine owner. These policies would have controlled access to the computer's public key by other clients, servers, or services. In contrast to most public key infrastructure systems, the public key in an NGSCB-capable system would not have been made widely available. This design was intended to prevent indiscriminate tracking of users or computers on the Internet through their public keys.[9] The key would have been protected to mitigate identity profiling and tracking.[74]