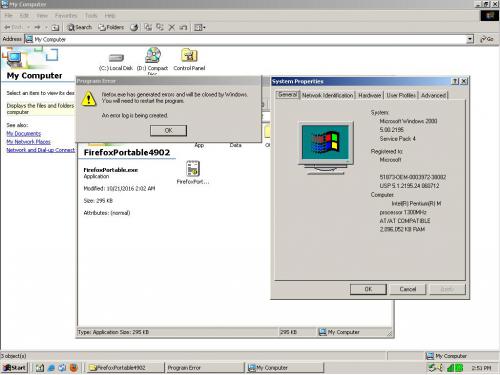

You can check the Event Viewer to see if there is any additional information that was missed. When Dr. Watson is invoked, it most likely just collects the memory offsets and the process images/modules that were loaded when the crash occurred, much as it still does on more recent versions of Windows, even if what you are seeing here looks different. Using CFF Explorer or similar tools you can see which Visual C++ version the executable was compiled with. Since Firefox is open source, its changelogs and source code changes are public if you are keen to look into what was actually changed, whether it went unmentioned or the changes were too extensive to make sense of. Comparing what changed specifically for the Windows platform between 48 and 49 would be a good start. It does not help that DEC already had a mass-produced 64-bit processor in the early 1990s while Microsoft, in tandem with Intel, struggled along for years trying to make us believe that more memory didn't exist.
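If you don't have CFF Explorer at hand, the same two header fields can be read with a few lines of Python. This is only a rough sketch: `read_pe_versions` and the `LINKER_TO_TOOLSET` table are names I made up for illustration, the linker version is merely a hint (non-Microsoft linkers can write anything there), and the subsystem version is the minimum Windows version the loader will accept (5.0 is Windows 2000, 5.1 is XP).

```python
import struct

# Rough mapping from the linker major version to the Microsoft toolset.
# Illustrative only; other linkers may put arbitrary values here.
LINKER_TO_TOOLSET = {
    6: "Visual C++ 6.0",
    7: "Visual Studio .NET 2002/2003",
    8: "Visual Studio 2005",
    9: "Visual Studio 2008",
    10: "Visual Studio 2010",
    11: "Visual Studio 2012",
    12: "Visual Studio 2013",
    14: "Visual Studio 2015 or later",
}


def read_pe_versions(data: bytes) -> dict:
    """Return linker and subsystem versions from a PE image's headers."""
    if data[:2] != b"MZ":
        raise ValueError("not an MZ executable")
    # e_lfanew at offset 0x3C points to the "PE\0\0" signature.
    (pe_offset,) = struct.unpack_from("<I", data, 0x3C)
    if data[pe_offset:pe_offset + 4] != b"PE\0\0":
        raise ValueError("PE signature not found")
    # Skip the 4-byte signature and the 20-byte COFF file header.
    opt = pe_offset + 4 + 20
    magic, linker_major, linker_minor = struct.unpack_from("<HBB", data, opt)
    # The subsystem version sits at offset 48 of the optional header
    # in both PE32 and PE32+ images.
    sub_major, sub_minor = struct.unpack_from("<HH", data, opt + 48)
    return {
        "pe32_plus": magic == 0x20B,
        "linker": (linker_major, linker_minor),
        "subsystem": (sub_major, sub_minor),
        "toolset_guess": LINKER_TO_TOOLSET.get(linker_major, "unknown"),
    }
```

To try it, read the executable with `open(path, "rb").read()` and pass the bytes in. If the subsystem version comes back higher than 5.0, Windows 2000 will refuse to load the image no matter what the CPU supports.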

48.0 Release Notes

After version 48, SSE2 CPU extensions are going to be required on Windows

(Changed)

49.0 Release Notes

Ended Firefox for Windows support for SSE processors

(Changed)

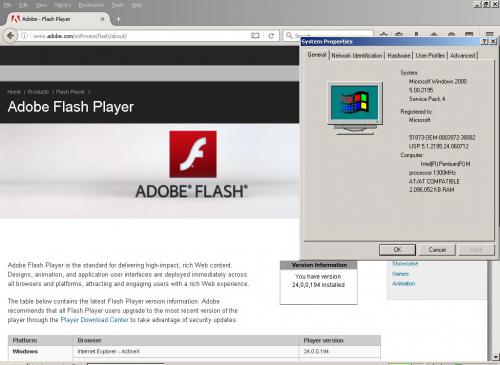

Those were the only changes mentioned that relate to this issue, as you suggested. More fundamentally, if that was the only change, the question is whether the underlying components affected by it caused the regression you describe, rather than the application as a whole being at fault. I suspect that regardless of whether your hardware supports SSE2, Windows 2000 itself has components, pulled in as dependencies, that lack SSE support, and that this is what triggered the regression. SSE2 was introduced in 2000 with the original Pentium 4, as the Wikipedia article on it notes.
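To rule the hardware in or out, the SSE levels come down to three feature bits from CPUID leaf 1. Here is a minimal sketch of decoding them; the function names are mine, and how you obtain the raw `EDX`/`ECX` values (a CPU info utility, a debugger, a small native helper) is deliberately left out.

```python
# CPUID leaf 1 feature bits, as documented by Intel and AMD.
EDX_SSE = 1 << 25   # original SSE (Pentium III era)
EDX_SSE2 = 1 << 26  # SSE2 (Pentium 4 era)
ECX_SSE3 = 1 << 0   # SSE3 (Prescott era)


def sse_levels(edx: int, ecx: int) -> dict:
    """Decode which SSE levels the raw CPUID leaf 1 registers advertise."""
    return {
        "SSE": bool(edx & EDX_SSE),
        "SSE2": bool(edx & EDX_SSE2),
        "SSE3": bool(ecx & ECX_SSE3),
    }


def has_sse2(edx: int, ecx: int = 0) -> bool:
    """True if the CPU advertises SSE2, the level Firefox 49 requires."""
    return sse_levels(edx, ecx)["SSE2"]
```

A CPU that reports SSE but not SSE2 is exactly the case the 49.0 release notes dropped. If your CPU does report SSE2 and the crash still happens, that points back at the OS or runtime side rather than the processor.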

Windows 2000 was released in 1999, when some commonly used processors that lacked even the original SSE were still supported. It is quite plausible that, because Windows 2000 had to support processors without SSE (including pre-K6 AMD units), Microsoft had to keep compiling critical components of the operating system without requiring or using these instructions. That would explain why current applications that assume them at runtime no longer work, even though earlier builds ran fine in that environment: the moment Mozilla's developers enforced the hard requirement, every component involved had to cope with SSE2 as well.

XP SP2 faced a similar split when it began enabling the NX bit automatically on CPUs that supported it. A series of patches eventually "cured" the problems, so long as you had the right CPU at the point you upgraded, but if you add it all up, every component of XP SP2 had to handle both outcomes while also staying compatible with regular old SSE instructions.

Microsoft, for instance, could have kept support for architectures such as Alpha, PowerPC and SGI MIPS using what is now no big deal apart from some disk space, such as preprocessor definitions. At the time it was deemed too much bloat, aggravation and storage (due to the number of configurations and builds). Broadband was a crazy notion then unless you were sharing it with a university for work on a publication, which is how Slackware or Debian were able to win over the universities; subsidy was only given for mirroring and the like, because the institution itself depended on your work succeeding. I use the term subsidy lightly, because these were times of enlightenment in the computing world, and everyone had their own opinion.

Apple left iTunes and pre-Prescott users destitute on XP SP2, while people were still hacking OS X Tiger to (continue to) work on ThinkPad T30s in 2006, when it enforced SSE2 upon innocent bystanders and then forced SSE3 the next year. This was despite SSE2 having arrived with the original Pentium 4 and SSE3 with Prescott in 2004, which clearly shows how slowly progress is made in the PC world. People did, though, backport, convert, strip and merge pieces of programs when this switch happened to (try to) make things work as times were changing, especially when the authors refused to adopt patches or amend their work, even when that refusal was unreasonable.

Compilers change as advancements are made, as do semiconductors and staffing, and it is pretty clear now that GCC was on legacy 2.95 and an alpha-quality 3.x back then, alongside Intel's and Microsoft's own internal changes to their compilers. The compilers impose a hard requirement because the developers lack the interest and capacity to support anything else, and that should not be taken as a sign of abandonment. Given the lack of fundamental changes compared with the previous release, recompiling Firefox 49.0 now should not be a fundamental or critical problem if a certain amount of effort is applied. I said this here a few months ago, and it is strange to have to bring it up in connection with NeXTSTEP or similar, but this is a matter of economics and reasoning. You should be able to take a commit from that period and produce a usable build now. As time goes on, though, without fundamental knowledge and willpower, your ability to keep up with the changes will only continue to decay, like entropy, until nobody, not even those who actually worked on the code, can keep track of what was changed, given our human limits.

That's why it really needs XP, even though XP is dead, and in domino fashion 2000 has to suffer. Developers get lazy because they have to: they have too many ports, bosses and goals. Since this is an open-source project, you can sift through the logs to determine the recommended compiler and supporting environment, even if it was never explicitly documented, by grepping through the files, wiki or no wiki. Everything after that depends on your own passion to start learning and to make sense of the mistakes that were made, so that others can appreciate the work you perfect, rather than blaming crash logs and stack traces and hoping somebody cares today. We all care, but those who knew have moved on. Using such an old system for any production work is really a question of perseverance.

With Visual Studio/C++ .NET or 2005 installed, you should (or at least may) be able to debug the binary without source and have the debugger break on the exception, which can give you the loaded module that threw the exception Dr. Watson caught. I would do it myself, but I cleaned house on all my virtual machines when I upgraded disks. 2005 is mentioned as the last version to support 2000 and to target NT 4.0. [1]
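Even without a debugger, the fault address and the module list from the Dr. Watson log are enough to narrow down the culprit, since each module occupies a base-plus-size range. A small sketch of that lookup follows; the `Module` type and `find_module` are hypothetical names of my own, and any addresses you feed it should come from your own log.

```python
from typing import NamedTuple, Optional, Sequence, Tuple


class Module(NamedTuple):
    name: str
    base: int  # load address of the image
    size: int  # size of the mapped image in bytes


def find_module(address: int,
                modules: Sequence[Module]) -> Optional[Tuple[str, int]]:
    """Return (module name, offset into module) for a fault address.

    Returns None when the address is outside every listed module,
    for example in heap or JIT-generated code.
    """
    for m in modules:
        if m.base <= address < m.base + m.size:
            return m.name, address - m.base
    return None
```

If the fault lands in a system DLL rather than in Firefox's own modules, that would support the dependency theory above. An exception code of 0xC000001D (illegal instruction) at that address would be the usual sign of an unsupported opcode.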

[1] Visual Studio 2005